Task 2

Name: New technology-assisted methodologies in singing teaching/learning

Coordination: FEUP/ESMAE-IPP

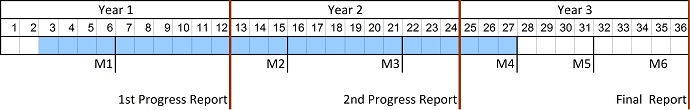

Duration: 25 months

Task description

The objective of this task is the design, implementation and validation of interactive visual feedback technologies for singing, as well as technology-assisted teaching, learning and training methodologies, particularly at beginner level. Singing students and teachers from ESMAE (Prof. Rui Taveira) and from UCP (Prof. Sofia Serra) will collaborate closely with engineers to enhance current methodologies for learning, teaching and practicing singing with technologies that provide objective visual feedback on singing expression, in addition to the natural auditory feedback. This combined and richer feedback will help students better grasp the subjective and objective dimensions of their singing exercises, which is expected to make learning faster and more effective [Hop06]. In particular, the software environment should provide means to:

- detect objectively if the singing is out of tune,

- easily identify the vocal range profile of each singer and classify his/her voice type (e.g. bass, baritone, tenor, alto, soprano),

- visualize objectively the amplitude and frequency of the vibrato,

- visualize objectively the loudness of the singing voice,

- visualize objectively the continuity of the singing line (legato),

- visualize objectively the speed and quality of articulation,

- visualize objectively the metamorphosis of the vowels as a function of the fundamental frequency (i.e., as pitch increases, all vowels converge to the /a/),

- visualize objectively the dynamics and micro-dynamics of the singing voice (a property related to the style and musicality of the singing voice denoted for example by the variation and duration of the musical notes).
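As an illustration of the first item in the list above, a common way to quantify tuning is to measure the deviation, in cents, between an estimated fundamental frequency and the nearest note of the equal-tempered scale. The sketch below is only a minimal example under stated assumptions: a reference tuning of A4 = 440 Hz, twelve-tone equal temperament, and a hypothetical tolerance of 20 cents. The function names are illustrative and are not taken from the existing software environment.

```python
import math

A4_HZ = 440.0  # assumed reference tuning (not specified in the task)


def cents_off_nearest_note(f0_hz, a4_hz=A4_HZ):
    """Return (nearest MIDI note number, deviation in cents) for a pitch in Hz."""
    # Continuous MIDI pitch: 69 corresponds to A4, 12 semitones per octave.
    midi_float = 69.0 + 12.0 * math.log2(f0_hz / a4_hz)
    nearest = round(midi_float)
    # One semitone is 100 cents.
    return nearest, 100.0 * (midi_float - nearest)


def is_out_of_tune(f0_hz, tolerance_cents=20.0):
    """Flag a pitch whose deviation exceeds a (hypothetical) tolerance."""
    _, cents = cents_off_nearest_note(f0_hz)
    return abs(cents) > tolerance_cents
```

In practice the tolerance would have to be chosen together with the singing teachers, and the target note would come from the exercise score rather than from the nearest semitone.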

As a starting point, the task will use a software environment for visual feedback in singing that has been developed over the last four years under the coordination of the PI [SIS]. This software already includes competitive interactive functionalities: real-time visualization of the singing pitch on a musical scale, detailed time and spectral representations, and transcription of the pitch line to MIDI (Musical Instrument Digital Interface, a protocol for the symbolic representation of music). The results of TASK1, TASK4 and TASK5 will be integrated into this environment, possibly after some redesign work to facilitate scalability.
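To make the idea of transcribing a pitch line to MIDI concrete, the sketch below groups consecutive voiced frames of a fundamental frequency contour into note events. It is a simplified illustration and not the method used in the existing environment: the frame period of 10 ms, the minimum note length and the function names are all assumptions, and unvoiced frames are assumed to be marked with `None` or `0`.

```python
import math


def hz_to_midi(f0_hz, a4_hz=440.0):
    """Continuous MIDI pitch for a frequency in Hz (A4 = 440 Hz assumed)."""
    return 69.0 + 12.0 * math.log2(f0_hz / a4_hz)


def contour_to_notes(f0_frames, frame_s=0.01, min_frames=5):
    """Group frames sharing the same rounded MIDI number into notes.

    Returns a list of (midi_note, onset_seconds, duration_seconds).
    Runs shorter than min_frames are discarded as spurious.
    """
    notes = []
    current = None  # (midi_note, start_frame_index) of the open note, if any
    # A trailing None acts as a sentinel that closes the last open note.
    for i, f0 in enumerate(list(f0_frames) + [None]):
        midi = round(hz_to_midi(f0)) if f0 else None
        if current is not None and midi != current[0]:
            length = i - current[1]
            if length >= min_frames:
                notes.append((current[0], current[1] * frame_s, length * frame_s))
            current = None
        if current is None and midi is not None:
            current = (midi, i)
    return notes
```

A contour with a wide vibrato may cross semitone boundaries and be split into several short notes by this simple rounding, so a practical transcription would smooth the contour first.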

To improve the interactive value of the visual feedback environment, editing capabilities will also be added whenever feasible from a signal processing point of view. These will make it possible to transform the recorded singing signal in such a way as to correct or modify a desired expressive aspect, for example to increase or reduce the vibrato extent. The capabilities will be available through an intuitive and easy-to-use touch-screen menu. Auto-scored singing exercises will also be supported by the visual feedback environment.
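The vibrato example can be sketched at the level of the pitch contour alone. The minimal example below scales the deviations of a sustained note's fundamental frequency around its mean, working in the log-frequency domain so that the change is symmetric in cents. It is an assumption-laden illustration: the function name is hypothetical, the whole-note mean is used as the vibrato center, and resynthesizing audio from the modified contour is not covered.

```python
import math


def scale_vibrato_extent(f0_hz, factor):
    """Scale pitch deviations of a voiced contour around its mean.

    f0_hz:  fundamental frequency values (Hz) of one sustained note.
    factor: 1.0 leaves the contour unchanged, values below 1.0 reduce
            the vibrato extent, values above 1.0 increase it.
    """
    logs = [math.log2(f) for f in f0_hz]
    center = sum(logs) / len(logs)  # mean pitch in log-frequency
    return [2.0 ** (center + factor * (x - center)) for x in logs]
```

With `factor=0.0` every frame collapses to the geometric mean of the contour, and with `factor=2.0` the peak-to-peak extent in cents doubles.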

This task will involve intensive collaboration between engineers (FEUP) and singers (students and teachers at ESMAE and UCP), both to validate the signal processing functionalities of the visual feedback environment and to fine-tune and optimize the interaction and usability of its Graphical User Interface (GUI).

The GUI will be carefully designed, since the final users are mainly singing students, teachers and professionals. The above goals can be met only if the solutions created in the project operate in real time, are non-invasive, work with both running speech and singing, are intuitive to use, and have user-friendly GUIs. These constraints are quite severe and explain why existing technological solutions, for acoustic voice analysis for example, are regarded as disappointing by many voice clinicians [cost2103].

Expected results

The main results of this task are four reports (one every six months), two journal papers, one international conference paper, and one prototype software environment.

Human resources: 37.65 person-months.